Sometimes really rich passages have so much going on that we miss some of the codes that should apply. There are other reasons we miss things to. You've been reading along thinking about what you'll be having for dinner, and suddenly realize you have no idea what you've just read. If there's anything I've learned over the years it's that all analysts miss things. But this passage might generate discussion around whether the code should also capture instances where participants reconcile the sense of division and embrace both sides of themselves. The code definition we discussed earlier had primarily negative connotations. For instance, in Deci, you might find that only one analyst applied the code being in between to the following segment.

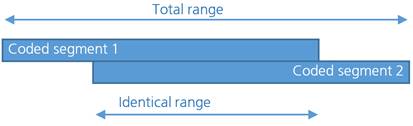

Remember, you may need to discuss what you mean for the code to capture in order to meet your project objectives. If you have different interpretations of the data or ideas about whether the code applies, work to resolve them, making changes to the code definitions as needed. If you both agree that it should apply, check the definition to see if you need to make any adjustments. But where only one coder applied the code, you want to read the text together and discuss whether you think the code should be applied there. Hopefully, you'll see many instances where both applied a particular code. I recommend going one code at a time and reviewing every instance where either analyst applied the code. I just want to be sure that both analysts are capturing the key nuggets. I usually ignore differences in segmentation at this stage. Then you want to combine the two coded data sets so you can see both analysts work on a single transcript. Both analysts should code the same transcripts usually two to three depending on their length. But let me walk you through the process here. In the next max QDA video, I'll walk you through the mechanics of comparing code application with the software. If at all possible, I recommend doing this with another analyst because they'll bring new insight to the data as they will likely see it through a slightly different lens. Just be sure to give yourself some time between the first and second time, and use a clean data set each time so you won't be tempted to see what you did before. If you don't have a second person to work with, you can also code the same data twice yourself. In row, the (no) concordances are added together, so that an average percentage of match can be calculated.Once your code book is fairly well-developed, one of the best ways to refine and finalize your codes and definitions is to have two separate analysts code a small subset of your data, and assess how well they agreed on the application of codes. 
Each code shows the total number of coded segments (total column), the number of matches (chords), and the percentage of code-specific agreement. It also indicates where the weak points are, that is, the codes do not get the desired percentage overcost. The table provides an overview of the correspondences (agreements) and disagreements (disagreements) on code assignments between the two codifiers. This table contains as many rows as the number of codes included in the intercoder tuning test.Ĭodes that have not been assigned to either coders are ignored. However, this percentage is provided by MAXQDA. In other words, the actual percentage of concordance is not the most important aspect of the tool. In the field of qualitative research, the goal of comparing independent programmers is to discuss differences, determine why they occurred, and learn from the differences in order to improve coding agreement in the future. The MAXQDA Intercoder Agreement function allows you to compare two people encoding the same document independently of the other. They assume, for example, that the coding is not arbitrary or random, but that a certain degree of reliability is achieved. When assigning codes to qualitative data, it is recommended to define certain criteria. The following dialog box appears where you can customize the intercoder agreement verification settings. This is usually not substantively relevant, but can lead to an unnecessarily low percentage of concordance and “false” non-agreements if the coding is absolutely identical. B because a person has coded a word more or less. Often, programmers easily deviate when assigning codes, for example. Depending on the parameter selected, the table contains the segments of both encoders or only those of an encoder and indicates whether the second encoder has assigned the same code to that location. 
The second table makes it possible to precisely check the intercoder agreement, that is, to determine for which coded segments the two encoders do not match.
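To make the two tables concrete, here is a minimal Python sketch of how code-level and segment-level agreement could be computed. It is not MAXQDA's implementation. It assumes each coded segment is stored as a code plus a character range, and it treats two segments as agreeing when they carry the same code and their overlap (shared length divided by combined extent) reaches a threshold, which is one way to tolerate a coder including a word more or less. The names `Segment`, `segment_results`, `code_results`, the `min_overlap` parameter, its 0.9 default, and the example codings are all hypothetical. The final row follows the text's description by summing agreements and disagreements and dividing total agreements by all compared segments, which is an assumption about how the average is formed.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """Hypothetical record: a code applied to the character range [start, end) of one transcript."""
    code: str
    start: int
    end: int


def overlap_rate(a: Segment, b: Segment) -> float:
    """Shared length of the two ranges divided by their combined extent; 0.0 if they do not overlap."""
    shared = max(0, min(a.end, b.end) - max(a.start, b.start))
    extent = max(a.end, b.end) - min(a.start, b.start)
    return shared / extent if extent else 0.0


def has_match(seg: Segment, other_coder: list[Segment], min_overlap: float) -> bool:
    """True if the other coder applied the same code to a sufficiently overlapping range."""
    return any(
        o.code == seg.code and overlap_rate(seg, o) >= min_overlap for o in other_coder
    )


def segment_results(coder_a, coder_b, min_overlap=0.9):
    """Segment-level view: every segment of both coders, flagged as matched or not."""
    rows = []
    for label, mine, theirs in (("A", coder_a, coder_b), ("B", coder_b, coder_a)):
        for seg in mine:
            rows.append((label, seg, has_match(seg, theirs, min_overlap)))
    return rows


def code_results(coder_a, coder_b, min_overlap=0.9):
    """Code-level view: (code, agreements, disagreements, total, percent) for each code
    either coder used, followed by a 'Total' row that sums agreements and disagreements."""
    counts = defaultdict(lambda: [0, 0])  # code -> [agreements, disagreements]
    for _, seg, matched in segment_results(coder_a, coder_b, min_overlap):
        counts[seg.code][0 if matched else 1] += 1

    rows = []
    for code in sorted(counts):
        agree, disagree = counts[code]
        rows.append((code, agree, disagree, agree + disagree, 100 * agree / (agree + disagree)))

    agree_sum = sum(r[1] for r in rows)
    disagree_sum = sum(r[2] for r in rows)
    total = agree_sum + disagree_sum
    rows.append(("Total", agree_sum, disagree_sum, total, 100 * agree_sum / total if total else 0.0))
    return rows


if __name__ == "__main__":
    # Hypothetical codings of one transcript; coder B left out the first two characters.
    coder_a = [Segment("being in between", 0, 100), Segment("family", 200, 260)]
    coder_b = [Segment("being in between", 2, 100), Segment("family", 300, 340)]

    for row in code_results(coder_a, coder_b):
        print(row)
    for label, seg, matched in segment_results(coder_a, coder_b):
        if not matched:
            print("no match:", label, seg)
```

In this example the slightly different "being in between" segments still count as agreement (their overlap is 0.98), while the two "family" segments do not overlap at all and show up in the segment-level list. Those unmatched segments are the ones worth reading together and discussing. Raising `min_overlap` toward 1.0 shows how much of a low percentage comes from segmentation alone rather than substantive disagreement.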
